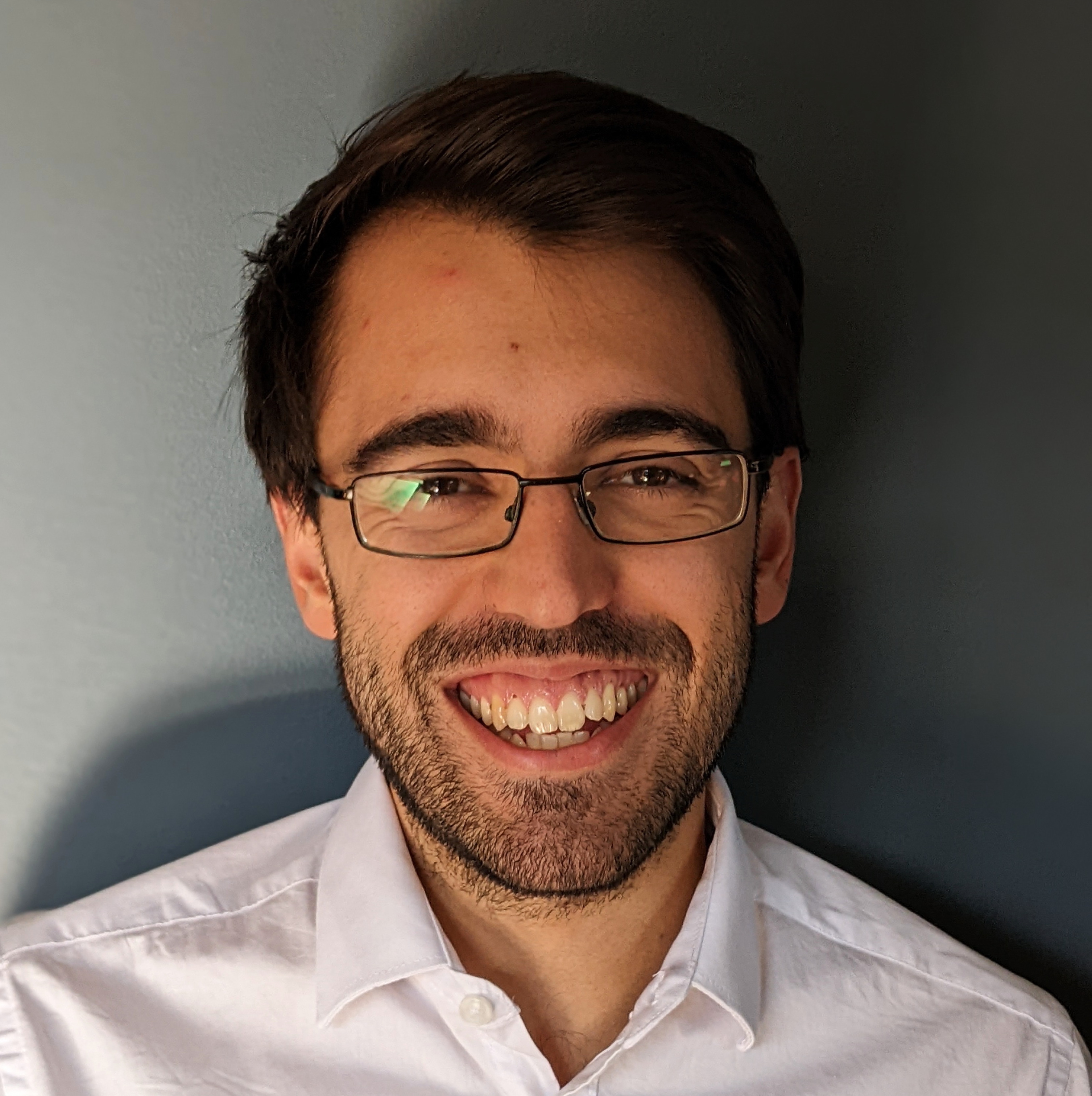

Andrea Lodi

Mixed-Integer Programming: 65 years of history and the Artificial Intelligence challenge

Abstract: Mixed-Integer Programming (MIP) technology is used daily to solve (discrete) optimization problems in contexts as diverse as energy, transportation, logistics, telecommunications, and biology, to name just a few. The roots of MIP date back to 1958, with the seminal work by Ralph Gomory on cutting plane generation. In this talk, we will discuss, from the (biased) viewpoint of the speaker, how MIP evolved in its main algorithmic ingredients, namely preprocessing, branching, cutting planes, and primal heuristics, to become a mature research field whose advances rapidly translate into professional, widely available software tools. We will then discuss the next phase of this process, in which Artificial Intelligence and, specifically, Machine Learning are already playing a significant role, one that is likely to grow.

When: 19 April 2024, 11am (EST)

Where: Pavillon André-Aisenstadt (Université de Montréal), 4th floor, GERAD Conference Room (4488)